I still remember sitting in my studio at three in the morning, staring at a digital print that looked absolutely perfect on my calibrated monitor, only to have it look like a muddy, yellow mess the moment I stepped into the natural light of dawn. It was one of those soul-crushing moments where you realize your gear isn’t the problem—it’s your fundamental misunderstanding of how light actually behaves. Most textbooks treat chromatic adaptation models like some abstract, untouchable math problem reserved for PhDs, but in the real world, they are the only thing standing between a professional result and a total color disaster.

I’m not here to drown you in dense, academic jargon or sell you on some overpriced software that claims to “fix everything” with one click. Instead, I’m going to strip away the fluff and show you how these models actually function in a practical workflow. We are going to look at the real-world mechanics of how color shifts occur and how you can actually predict them. By the time we’re done, you’ll have a no-nonsense grasp on how to master your color science without needing a degree in physics.

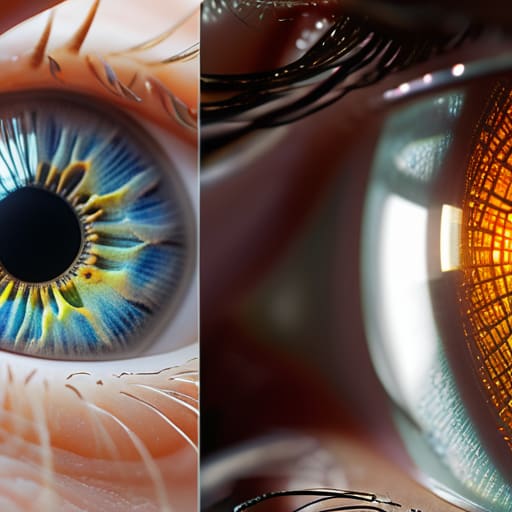

Decoding Color Constancy Mechanisms in Vision

To understand why we don’t see the world as a shifting mess of hues, we have to look at how our brains achieve color constancy. Imagine walking from a brightly lit sunny sidewalk into a dim, blue-tinted hallway. If our eyes were simple light meters, everything would suddenly look murky and unnaturally blue. Instead, our brains perform a sort of real-time “auto-white balance.” We don’t just see raw light; we interpret it based on the context of the environment, allowing us to recognize that a red apple is still red, regardless of whether it’s under a warm sunset or a cool fluorescent bulb.

This isn’t just magic; it’s a sophisticated biological process. Much of this logic traces back to Edwin Land’s retinex theory of color vision, which suggests that our perception is built by comparing different parts of a scene to find relative differences. Because the visual system analyzes the relationships between colors rather than just their absolute values, human color perception stays remarkably stable. We are essentially running complex, subconscious calculations to strip away the “tint” of the lighting, leaving us with a stable estimate of the colors of the objects themselves.

The Legacy of Von Kries Transform Theory

If we’re going to talk about how we actually model these shifts, we have to start with the heavy hitter: the von Kries transform theory. In the early 1900s, Johannes von Kries proposed something that remains the backbone of most modern color science. The core idea is deceptively simple: instead of viewing color as a single, messy variable, he suggested that the three cone types in the eye (sensitive to long, medium, and short wavelengths, loosely “red,” “green,” and “blue”) adapt independently. When the lighting changes, the theory posits that the sensitivity of each channel scales up or down by its own gain to compensate for the new light source.

It’s a bit like adjusting the volume on three different instruments in a band to make sure the overall song sounds balanced, even if the acoustics in the room change. While modern computational methods have become incredibly sophisticated, most white balance corrections used in digital photography today are still essentially playing in the sandbox that von Kries built: an illuminant estimation algorithm guesses the light source, and per-channel gains do the correcting. Later models add better-tuned channel spaces and non-linear terms on top of simple scaling, but the fundamental logic of adjusting channel gains to maintain stability remains the starting point for mimicking human color perception.
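To make the “three volume knobs” idea concrete, here is a minimal sketch of a von Kries-style adaptation in plain Python. It assumes colors are given as CIE XYZ values, uses the Hunt-Pointer-Estevez matrix (D65-normalized) as one common way to approximate cone responses, and takes the standard white points for illuminant A and D65. The function names are made up for illustration, and this is a simplified baseline rather than how any particular product does it.

```python
# Hunt-Pointer-Estevez matrix (D65-normalized): XYZ -> approximate cone (LMS) responses.
# Assumption: other cone matrices (e.g. Bradford) could be swapped in here.
HPE = [
    [0.40024, 0.70760, -0.08081],
    [-0.22630, 1.16532, 0.04570],
    [0.00000, 0.00000, 0.91822],
]

# Reference white points in XYZ (Y normalized to 1.0).
WHITE_A = [1.09850, 1.00000, 0.35585]    # incandescent-like illuminant A
WHITE_D65 = [0.95047, 1.00000, 1.08883]  # daylight D65


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]


def inverse_3x3(m):
    """Invert a 3x3 matrix via the adjugate formula."""
    a, b, c = m[0]
    d, e, f = m[1]
    g, h, i = m[2]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[x / det for x in row] for row in adj]


def von_kries_adapt(xyz, src_white, dst_white, cone_matrix=HPE):
    """Map a color seen under src_white to its counterpart under dst_white."""
    lms = mat_vec(cone_matrix, xyz)
    lms_src = mat_vec(cone_matrix, src_white)
    lms_dst = mat_vec(cone_matrix, dst_white)
    # One independent gain per cone channel: the three "volume knobs".
    gains = [dst / src for dst, src in zip(lms_dst, lms_src)]
    adapted_lms = [gain * value for gain, value in zip(gains, lms)]
    # Back from cone space to XYZ.
    return mat_vec(inverse_3x3(cone_matrix), adapted_lms)


# Usage: a warm-looking color measured under illuminant A, re-expressed for D65.
sample_under_a = [0.45, 0.40, 0.12]
print(von_kries_adapt(sample_under_a, WHITE_A, WHITE_D65))
```

A quick sanity check on any implementation like this: feed in the source white itself and you should get the destination white back, because the gains are defined to do exactly that.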

Pro-Tips for Navigating the World of Color Adaptation

- Don’t treat von Kries like a rulebook; treat it like a baseline. While it’s the foundation, modern vision science knows the real magic happens in the nuances that simple scaling misses.

- Always account for the “observer.” Chromatic adaptation isn’t a universal constant—it’s a biological process that shifts depending on the specific hardware (or eyeballs) doing the looking.

- Watch out for the “white point trap.” When testing models, ensure your reference illuminant is actually neutral; otherwise you’re just measuring your own error rather than the model’s accuracy.

- Context is everything. A model might nail a static laboratory setting but fall apart in a dynamic environment where lighting shifts rapidly, like walking from sunlight into a dim room.

- Think in terms of “perceptual stability” rather than just mathematical precision. A model that is mathematically perfect but feels “off” to a human eye is ultimately a failed model in the world of color science.

The Bottom Line: Why Chromatic Adaptation Matters

Our brains don’t just “see” light; they actively recalculate it to ensure a white shirt looks white whether it’s under a sunset or a fluorescent bulb.

While the von Kries transform gave us the mathematical foundation for understanding color shifts, modern vision science shows that the process is more complex than simple per-channel scaling.

Mastering these models is the key to building cameras and software that can replicate the “true” colors the human eye perceives, regardless of the lighting environment.

## The Intuition Behind the Math

“At its heart, chromatic adaptation isn’t just a complex set of equations; it’s the brain’s brilliant, subconscious hack to ensure that a red apple still looks red, whether you’re staring at it under the harsh glare of noon or the warm, amber glow of a sunset.”

The Final Spectrum

When we step back from the heavy math of von Kries transforms and the biological intricacies of color constancy, we see a much simpler truth: our eyes are constantly working behind the scenes to keep our world stable. We’ve traced the journey from how our neurons interpret shifting light to the mathematical models that attempt to replicate that magic in a digital space. While we still face hurdles in perfectly simulating every nuance of human perception, understanding these chromatic adaptation models is what allows us to bridge the gap between raw light data and the vibrant, consistent reality we actually experience every single day.

Ultimately, studying how we adapt to color isn’t just about refining camera sensors or perfecting digital displays; it’s about uncovering the profound way our brains negotiate with the physical world. Every time you walk from a dim hallway into the bright afternoon sun and your vision “settles,” you are witnessing a masterpiece of biological engineering. As we continue to push the boundaries of computational color science, we aren’t just building better tools—we are learning to decode the very essence of how we perceive existence itself.

Frequently Asked Questions

If von Kries theory is the foundation, why does it still struggle to account for modern complex lighting environments?

The problem is that von Kries assumes color channels act like independent sliders on a mixing board. In the real world, light isn’t that polite. Modern lighting (think narrow-band LEDs or scenes lit by several different sources at once) produces spectral changes that three fixed gains can’t fully describe, because the cones’ sensitivities overlap and the shift a surface undergoes depends on its own reflectance spectrum. When the light source changes its “flavor” in that way, the old math breaks down because it can’t account for those messy, non-linear dependencies.

How do digital cameras actually implement these models to stop photos from looking "off" when the white balance is wrong?

It’s essentially a digital tug-of-war. When your camera sensor captures a scene, it doesn’t “know” what white looks like; it just sees raw data. To fix this, the camera’s processor first runs an algorithm to estimate the color of the light source, often summarized as a color temperature. It then applies a scaling factor to each of the red, green, and blue channels, a step that is in spirit a modern evolution of von Kries, effectively “shifting” the colors back to a neutral baseline before you ever see the image.
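The estimate-then-scale loop can be sketched in a few lines. The example below uses the gray-world assumption (that the average color of a scene should be neutral) as a stand-in estimator. That is an assumption chosen for simplicity, not what any specific camera does; real pipelines use more elaborate and often proprietary methods. Pixels are assumed to be linear RGB tuples in the range 0 to 1, and the function names are hypothetical.

```python
def gray_world_gains(pixels):
    """Estimate per-channel gains by assuming the scene averages to gray."""
    count = len(pixels)
    means = [sum(p[c] for p in pixels) / count for c in range(3)]
    gray = sum(means) / 3.0
    # A channel that is too strong on average gets a gain below 1, and vice versa.
    return [gray / m for m in means]


def apply_gains(pixels, gains):
    """Scale each channel by its gain, clipping to the valid range."""
    return [
        tuple(min(1.0, gain * value) for gain, value in zip(gains, p))
        for p in pixels
    ]


# Usage: three pixels with a warm (orange) cast.
warm_scene = [(0.8, 0.5, 0.3), (0.4, 0.25, 0.15), (0.6, 0.4, 0.2)]
gains = gray_world_gains(warm_scene)
balanced = apply_gains(warm_scene, gains)
print(gains)
print(balanced)
```

Gray-world fails in predictable ways (a photo of a green lawn really is mostly green), which is one reason production white balance leans on smarter estimators while keeping the same per-channel scaling step.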

Is chromatic adaptation a purely biological process, or can we mathematically simulate it perfectly in computer vision?

It’s a bit of both, but “perfectly” is a heavy word. Biologically, our brains are doing some incredible, non-linear heavy lifting that we’re still trying to map out. Mathematically, we can get remarkably close using models like von Kries or more complex machine learning approaches. We can simulate the effect beautifully, but capturing the sheer nuance of human perception remains the ultimate moving target for computer vision.